Autonomous Vehicles & their Levels of Automation

What decision does a self-driving car make when a fatal accident is imminent? What happens if someone poisons the data, hacks the system and hijacks your car? And moreover, do you trust the technology enough to buy a self-driving car to begin with?

All these questions come up every single time at the dinner table after your grandfather asks about your work at Microsoft. The trolley dilemma for autonomous vehicles (AVs) seems to be the favourite debating topic of your grandfather ever since you bought your Tesla. Well that, and also making you second-guess your career choices after every Shabbat dinner.

However, even if you do not work at Microsoft, and you drive some other car or take the train back to your grandparents’ home… Even if you’re not Jewish and have grandparents who talk about gardening and holidays in Biarritz instead, you were probably alarmed at least once in your life by ethical decision-making in AI. Or at least by the thought of giving AI more power in a world that is still very experimental and lacks a regulatory framework strong enough to keep pace with technological progress. But I digress…

So how much decision-making power do self-driving cars have anyway, and how and when does human intervention come into play?

In this two-part article, we will explore what autonomous vehicles are, what their levels of automation are and how they are expected to reduce accidents caused by human error. We will also go over the risks of deploying this type of technology, the ethical implications, some of the current findings and, more importantly, what can go badly wrong.

We will address some open questions related to the different levels of automation and look at an ethical design approach as a potential solution to the problems we are about to cover.

Autonomous Vehicles (AVs) & their Levels of Automation

The starting point of this conversation is a simple question: what is actually considered a self-driving car? What makes a vehicle autonomous, and what type of autonomy are we talking about?

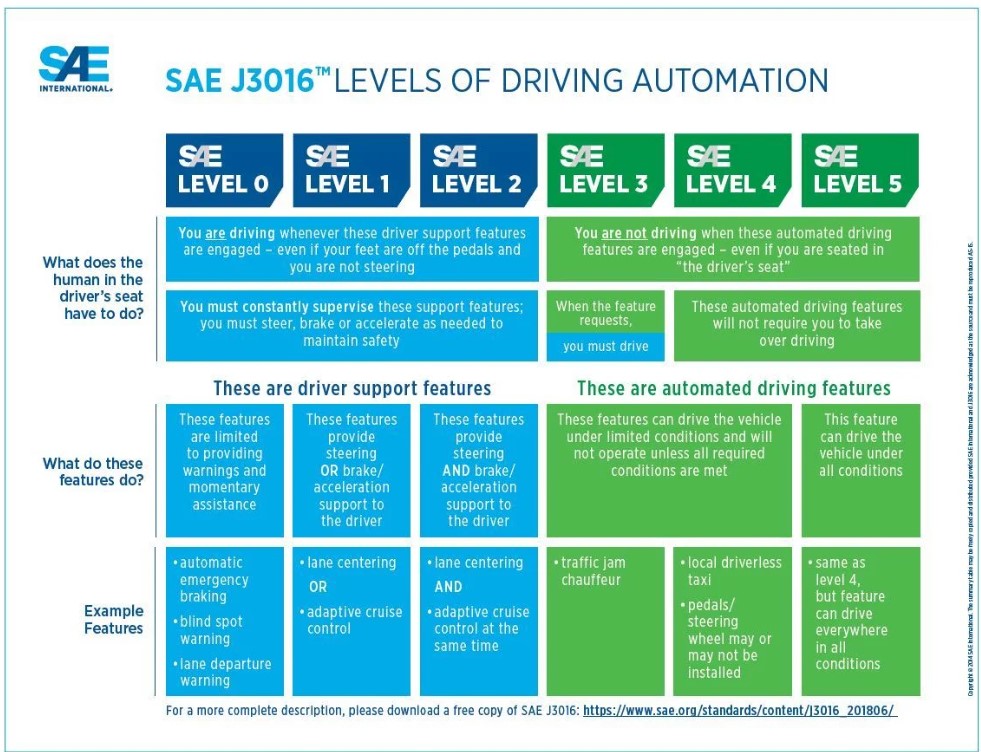

There are many opinions about AVs circulating online. However, the levels of driving automation are defined in a standard published by the Society of Automotive Engineers (SAE). They range from Level 0 (no automation) to Level 5 (full automation). This is what they entail:

| Level | Name |
| --- | --- |
| Level 0 | No Driving Automation |
| Level 1 | Driver Assistance |
| Level 2 | Partial Driving Automation |
| Level 3 | Conditional Driving Automation |
| Level 4 | High Driving Automation |
| Level 5 | Full Driving Automation |

Unpacking the Levels

Photo Credit: SAE International


Level 0 is manually controlled and has no driving automation. Warning assistance and automatic emergency braking are not considered driving functions, hence they do not qualify as automation.

In Levels 1 to 3, AVs are semi-autonomous. Driving is shared between the car and the driver, and the driver remains part of the driving task no matter how much automation or how many new features a level adds. In Levels 1 and 2 the driver must supervise at all times; in Level 3 the driver must be ready to take over whenever the system requests it. The current features include lane centering and/or adaptive cruise control (depending on the level, see image above) and traffic jam assist (Level 3).

The more exciting levels of automation are Levels 4 and 5, where the vehicle no longer requires the driver to be involved in the driving task(!). Driving is no longer shared. The entire configuration of the car may change. Pedals may not be needed. The steering wheel may even disappear! We may see a different seat arrangement if the driving task is taken away from humans entirely.
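The grouping of levels described above can be written down as a small lookup. This is only an illustrative sketch: the names `SAELevel`, `is_semi_autonomous` and `driver_involved` are hypothetical and not part of the SAE standard, and the rules simply encode the split used in this article (Level 0 manual, Levels 1 to 3 shared, Levels 4 and 5 driverless).

```python
from enum import IntEnum


class SAELevel(IntEnum):
    """The six levels from the table above (hypothetical Python names)."""
    NO_DRIVING_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_DRIVING_AUTOMATION = 2
    CONDITIONAL_DRIVING_AUTOMATION = 3
    HIGH_DRIVING_AUTOMATION = 4
    FULL_DRIVING_AUTOMATION = 5


def is_semi_autonomous(level: SAELevel) -> bool:
    """Levels 1 to 3: the car and the driver share the driving task."""
    return SAELevel.DRIVER_ASSISTANCE <= level <= SAELevel.CONDITIONAL_DRIVING_AUTOMATION


def driver_involved(level: SAELevel) -> bool:
    """Levels 0 to 3 keep a human in the driving task; Levels 4 and 5 do not."""
    return level <= SAELevel.CONDITIONAL_DRIVING_AUTOMATION


if __name__ == "__main__":
    for level in SAELevel:
        print(int(level), level.name, "driver involved:", driver_involved(level))
```

Using an `IntEnum` keeps the levels ordered, so "Level 3 or below" becomes a plain comparison.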

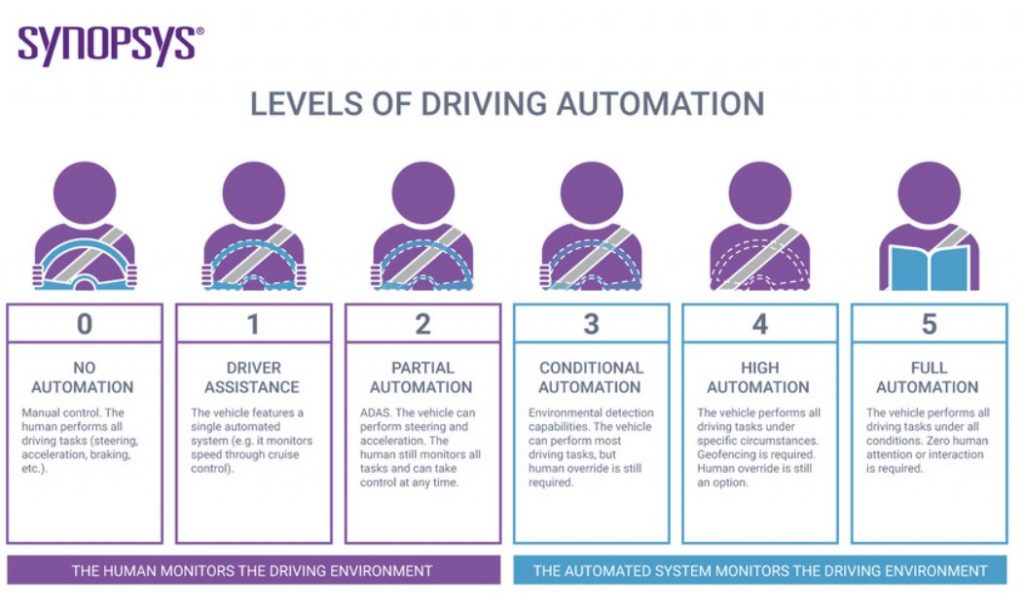

But before we get there, let’s explore the difference between these last two levels of automation. In Level 4, the AV can drive itself only within its scope, known as its Operational Design Domain (ODD).

But what does that mean?

If the AV’s scope is a specific location, the car will be location-bound, so it won’t work outside of that perimeter. If the car’s scope is Paris, it won’t work in the South of France. If its scope is driving only under specific weather conditions, or only during daytime or night-time, the car will abide by the Operational Design Domain and drive only on non-rainy days, for instance, or only within the specified time frame. The ODD has not (yet) been standardized; thus the rules will be established by each automaker.

Conversely, in Level 5, the car is not restricted by scope, but it is still limited to what is considered humanly drivable. Off-roading is not covered by any level of automation.
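To make the idea of an ODD more tangible, here is a minimal sketch of how such a check could look. Everything in it is an assumption made for illustration: the class names `Conditions` and `OperationalDesignDomain`, the function `may_drive_itself` and the three criteria (region, rain, hour of day) are hypothetical, since each automaker defines its own ODD. The "humanly drivable" limit on Level 5 is left out for brevity.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Conditions:
    """The situation the car is in right now (hypothetical fields)."""
    region: str
    raining: bool
    hour: int  # local time, 0-23


@dataclass(frozen=True)
class OperationalDesignDomain:
    """A toy ODD: where, in which weather and at what hours the car may drive itself."""
    regions: frozenset
    allows_rain: bool
    earliest_hour: int  # inclusive
    latest_hour: int    # exclusive

    def permits(self, c: Conditions) -> bool:
        in_region = c.region in self.regions
        weather_ok = self.allows_rain or not c.raining
        time_ok = self.earliest_hour <= c.hour < self.latest_hour
        return in_region and weather_ok and time_ok


def may_drive_itself(level: int, odd: Optional[OperationalDesignDomain], c: Conditions) -> bool:
    """Level 5 ignores the ODD, Level 4 must stay inside it, lower levels need a driver."""
    if level >= 5:
        return True
    if level == 4:
        return odd is not None and odd.permits(c)
    return False


# Example: a Level 4 car scoped to dry daytime driving in Paris.
paris_dry_daytime = OperationalDesignDomain(frozenset({"Paris"}), allows_rain=False,
                                            earliest_hour=7, latest_hour=20)
in_paris = Conditions(region="Paris", raining=False, hour=10)
in_marseille = Conditions(region="Marseille", raining=False, hour=10)
```

With these example values, `may_drive_itself(4, paris_dry_daytime, in_paris)` is true, while the same car in `in_marseille` is outside its scope; a Level 5 car would be allowed in both.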

Photo Credit: Synopsis


Do We Have a Reason to Worry about AVs?

It is important to mention that, to this day, we do not have fully autonomous cars. According to an Accenture report, the first fully autonomous vehicle is estimated to be ready after 2030. Despite that, what we CAN worry about is algorithmic morality, bias and the prospect of hundreds of thousands of AVs being released on the market without anyone having gotten the memo about ethical design.

Unfortunately, this is likely to occur, since tech makers seem to be in a continuous NASCAR race to release the next AV model rather than ensuring security and safety by design before the launch.

Even if self-driving cars have the potential to reduce avoidable crashes by 90% according to McKinsey, accidents are still inevitable, especially in the first phases of deploying the technology in real-world situations. As of May 15, 2022, 392 crashes involving Level 2 Advanced Driver Assistance Systems (ADAS) had been reported by the National Highway Traffic Safety Administration (NHTSA). The majority were reported by Tesla: 273 crashes. 90 crashes were reported by Honda, 10 by Subaru and the few remaining by other car manufacturers. It is important to note that most of the crashes were only fender benders. Six were fatal and five were serious.
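For a sense of proportion, the figures quoted above can be turned into shares of the total. The snippet below is a quick back-of-the-envelope calculation using only the numbers from this paragraph; the variable names are arbitrary. Keep in mind that these are raw counts, not crash rates: they say nothing about how many cars of each brand are on the road or how many kilometres they drove.

```python
TOTAL_CRASHES = 392  # Level 2 ADAS crashes reported as of May 15, 2022

reported = {"Tesla": 273, "Honda": 90, "Subaru": 10}
# "The few remaining" crashes are whatever is left of the total.
reported["Other manufacturers"] = TOTAL_CRASHES - sum(reported.values())

shares = {maker: count / TOTAL_CRASHES for maker, count in reported.items()}

if __name__ == "__main__":
    for maker, count in reported.items():
        print(f"{maker}: {count} crashes ({shares[maker]:.1%})")
    print(f"Fatal: 6 crashes ({6 / TOTAL_CRASHES:.1%})")
```

Tesla accounts for roughly 70% of the reports and Honda for about 23%, with 19 crashes left for all other manufacturers combined.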

In the second part of the article, we shall dive into some of the findings related to risks & ethical implications of self-driving cars. We will address some open questions related to the different levels of automation and immerse ourselves into an ethical design approach as a potential solution.

Featured Photo Credits: Brecht Denil