What is Open Ethics Vector

Why do we need an Open Ethics Vector

#AIFORGOOD… But who decides what’s “good” and what’s not?

What we know is that different communities can have different cultural codes. Indeed, culture is defined by our set of values, which guide behaviors and attitudes towards religion, gender, relationships, money, food, or health. These sets differ from one society to another. Can these values be aligned between AI systems and the users who belong to a specific culture? Instead of debating what's good, at the Open Ethics initiative we start from basic principles.

A set of such principles defines an Open Ethics Vector (OEV), which reflects the values that govern how data-driven decisions are made. Disclosing these value sets and making them open can help users learn which apps suit them best.

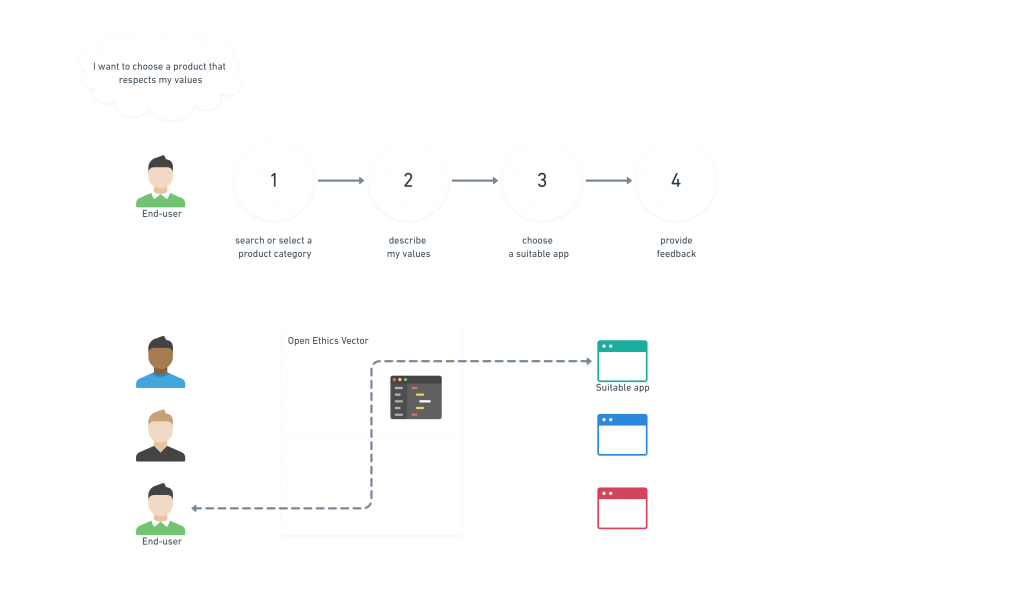

Incorporating a scale that marks the extent to which every “value” is satisfied will help end-users choose relevant products based on their ethical vectors.
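
As a rough illustration of how scaled values could be compared, the sketch below represents each vector as a set of scores between 0 and 1 and ranks products by how close they sit to a user's preferences. Everything in it is an assumption made for illustration: the principle names, the 0 to 1 scale, the Euclidean distance measure, and the function names are hypothetical and are not taken from the Open Ethics specification.

```python
from math import sqrt

# Hypothetical principle names: the source does not enumerate the actual
# principles that make up an Open Ethics Vector.
PRINCIPLES = ("privacy", "transparency", "fairness", "human_oversight")


def to_vector(scores):
    """Turn a {principle: score} mapping into an ordered tuple.

    Assumes each score lies on a 0..1 scale, where 1 means the value is
    fully satisfied. Principles missing from the mapping default to 0.
    """
    vec = tuple(float(scores.get(p, 0.0)) for p in PRINCIPLES)
    if any(not 0.0 <= v <= 1.0 for v in vec):
        raise ValueError("scores must lie in [0, 1]")
    return vec


def distance(a, b):
    """Euclidean distance between two equally long vectors."""
    return sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def rank_products(user_scores, products):
    """Return product names ordered from closest to farthest from the user."""
    user = to_vector(user_scores)
    return sorted(
        products,
        key=lambda name: distance(user, to_vector(products[name])),
    )


# Example data, invented for this sketch.
user = {"privacy": 0.9, "transparency": 0.8, "fairness": 0.7, "human_oversight": 0.5}
products = {
    "app_a": {"privacy": 0.9, "transparency": 0.7, "fairness": 0.7, "human_oversight": 0.5},
    "app_b": {"privacy": 0.2, "transparency": 0.3, "fairness": 0.9, "human_oversight": 0.1},
}
ranking = rank_products(user, products)
```

Here `ranking` lists `app_a` before `app_b`, because `app_a` differs from the user's preferences on only one principle. Other similarity measures, or per-principle weights chosen by the user, would fit the same structure.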


How does Open Ethics Vector work: use-cases

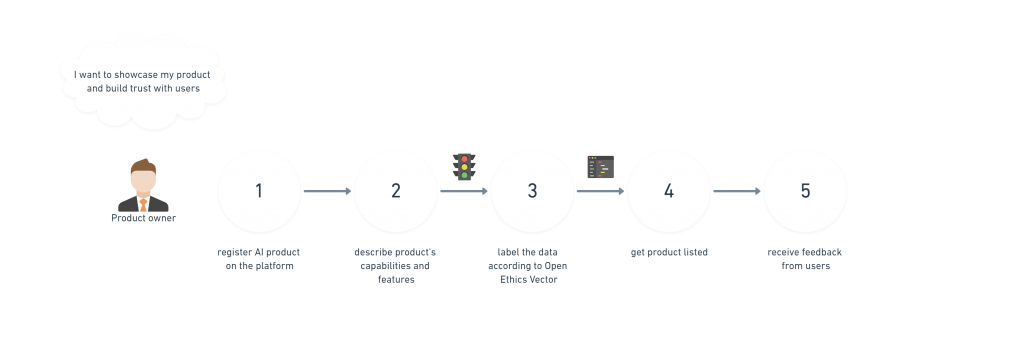

AI product owners get their products listed on the platform and are able to showcase their algorithmic decision-making approaches.

End-users can choose the AI solution based on their personal “vector of preferences”.

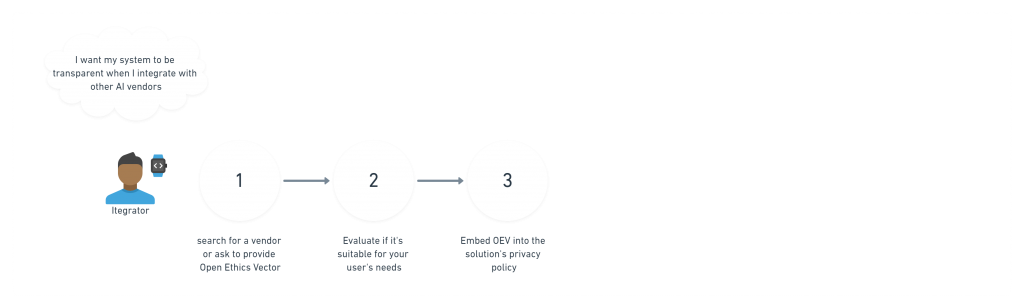

Solution providers/integrators can seamlessly access information about their vendors’ AI products.

The vision for the Open Ethics Vector